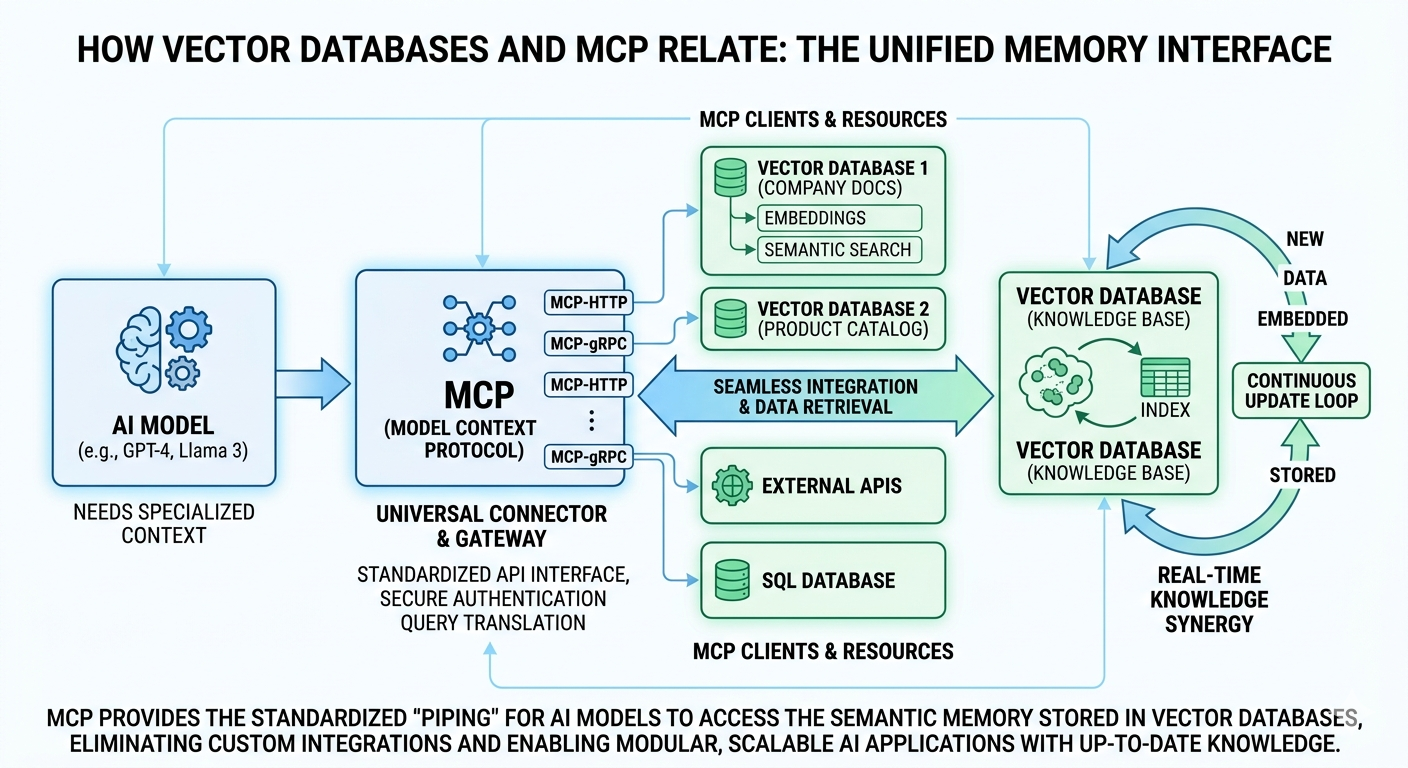

The connection between vector databases and the Model Context Protocol, or MCP, marks a real change in how we grant LLMs a “memory” and a “voice.” To understand how they relate, first view the vector database as the massive, silent library of an organization’s specialized knowledge. Rather than relying on exact matches against rows and columns, as traditional systems do, these databases index text, images, and documents as high-dimensional numeric vectors known as embeddings. Items with similar meaning end up close together in that vector space, so an AI can find information by conceptual similarity rather than by exact keyword match, effectively giving the model a way to “look up” facts it wasn’t originally trained on. However, a library is only useful if there is a standardized way for a researcher to walk through the front door and request a specific book. This is precisely where the Model Context Protocol enters the frame as a common interface.
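
To make “conceptual similarity” concrete, here is a minimal sketch of nearest-neighbor lookup over embeddings. The three-dimensional vectors and document names are hand-written assumptions for illustration only; a real system would get its vectors from an embedding model and would typically use an approximate index rather than a full scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical, hand-written "embeddings". Real embeddings come from a model
# and usually have hundreds or thousands of dimensions.
DOCUMENTS = {
    "refund policy": [0.9, 0.1, 0.0],
    "holiday schedule": [0.1, 0.9, 0.1],
    "api rate limits": [0.0, 0.2, 0.9],
}


def search(query_vector, k=2):
    """Return the names of the k documents most similar to the query vector."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in DOCUMENTS.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:k]]


# Stands in for the embedding of a question such as "how do I get my money back?"
query = [0.8, 0.2, 0.1]
results = search(query, k=2)
print(results)
```

The query shares no keywords with “refund policy,” yet that document ranks first because its vector points in nearly the same direction as the query’s.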

The relationship between these two technologies is essentially one of storage meeting delivery. MCP is an open standard that lets AI applications plug into various data sources without developers having to write a custom, brittle integration for every single database. When an AI agent needs to answer a complex query using private company data, an MCP server sitting in front of the vector database exposes search as a tool; the model’s host application sends a standardized request to that tool and receives the relevant context back. Without MCP, connecting an LLM to a vector database often requires bespoke “glue code” that is difficult to maintain; with MCP, the vector database becomes a modular tool that any compliant client can discover and call.
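
The “piping” can be sketched roughly as follows. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and arguments. Everything else here is an assumption for illustration: the tool name `search_documents`, the tiny corpus, and the keyword stub standing in for a real vector query. This is a hand-rolled sketch of the message shapes, not the official MCP SDK.

```python
import json


def search_documents(query, top_k=3):
    """Hypothetical tool. A keyword stub stands in for a real vector search."""
    corpus = [
        "Refunds are issued within 14 days.",
        "Offices close on public holidays.",
    ]
    words = query.lower().split()
    hits = [doc for doc in corpus if any(word in doc.lower() for word in words)]
    return hits[:top_k]


# Tools this sketch server exposes, keyed by the name a client would call.
TOOLS = {"search_documents": search_documents}


def handle_request(raw):
    """Dispatch one JSON-RPC request and return the JSON-RPC response string."""
    request = json.loads(raw)
    if request.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "error": error})
    params = request["params"]
    hits = TOOLS[params["name"]](**params.get("arguments", {}))
    # Tool results are returned as a list of content items.
    result = {"content": [{"type": "text", "text": hit} for hit in hits], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "search_documents", "arguments": {"query": "refunds", "top_k": 1}},
})
response = json.loads(handle_request(request))
print(response["result"]["content"][0]["text"])
```

The client never needs to know which database answered; it only sees a tool name, its arguments, and text content coming back.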

Furthermore, this pairing helps address the “stale knowledge” problem. Freshness comes from the vector database itself: as it is updated with new documents or live data feeds, the next retrieval sees them without any retraining of the model. What MCP contributes is a stable access path, so the retrieval mechanism behaves consistently across different platforms. In practice, a developer can swap one vector database provider for another, or move an AI agent from one environment to another, and as long as the MCP server exposes the same tools, the logic by which the model accesses its specialized context remains unchanged. By pairing the deep, semantic retrieval capabilities of vector databases with the interoperable framework of MCP, we are moving toward a world where AI isn’t just a static brain, but an active participant in a vast, interconnected ecosystem of information.
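
The provider-swapping idea can be sketched as a tool that depends only on an interface. All names below (`VectorStore`, `DictStore`, `ListStore`, `retrieve_context`) are hypothetical, and word overlap stands in for real embedding similarity; the point is only that the calling code stays the same when the backend changes, and that newly added documents show up on the next query.

```python
from typing import Protocol


class VectorStore(Protocol):
    """Hypothetical interface that the retrieval tool depends on."""

    def add(self, doc_id: str, text: str) -> None: ...
    def query(self, text: str, top_k: int) -> list[str]: ...


def _overlap(a: str, b: str) -> int:
    # Shared word count: a crude stand-in for embedding similarity.
    return len(set(a.lower().split()) & set(b.lower().split()))


class DictStore:
    """Stand-in for provider A: keeps documents in a dict."""

    def __init__(self) -> None:
        self._docs: dict[str, str] = {}

    def add(self, doc_id: str, text: str) -> None:
        self._docs[doc_id] = text

    def query(self, text: str, top_k: int) -> list[str]:
        ranked = sorted(self._docs.values(), key=lambda d: _overlap(text, d), reverse=True)
        return [d for d in ranked if _overlap(text, d) > 0][:top_k]


class ListStore:
    """Stand-in for provider B: different internals, same interface."""

    def __init__(self) -> None:
        self._rows: list[tuple[str, str]] = []

    def add(self, doc_id: str, text: str) -> None:
        self._rows.append((doc_id, text))

    def query(self, text: str, top_k: int) -> list[str]:
        scored = [(_overlap(text, body), body) for _, body in self._rows]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [body for score, body in scored if score > 0][:top_k]


def retrieve_context(store: VectorStore, question: str) -> list[str]:
    """The tool body: identical no matter which backend is plugged in."""
    return store.query(question, top_k=1)


question = "how long do refunds take"
outcomes = []
for store in (DictStore(), ListStore()):
    before = retrieve_context(store, question)  # nothing indexed yet
    store.add("d1", "refunds take 14 days")     # a new document arrives
    after = retrieve_context(store, question)   # visible on the next call
    outcomes.append((before, after))
print(outcomes)
```

Both backends give the same before-and-after answers, which is the property that lets the tool exposed to the model stay fixed while the storage layer changes underneath it.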
